By Tetiana Stoyko, CTO & Co-Founder

How Can Voice Technology Upgrade Your App? [Intro Guide]

Discover the possibilities of Voice Technology by exploring its major merits and the setup process. To learn more, have a look at this article!

Perhaps you’ve heard about the popularity of AI and Machine Learning, and how these two technologies are reshaping different markets. Voice Technology, which builds on both, is also rising in demand. If you’ve been wondering whether voice technology is worth paying attention to or is just a passing trend, you’ll find this article useful. Even the most ardent doubters must admit that voice-based solutions are gaining popularity faster than many other advancements, a fact that is hard to question.

To dispel any doubts, let’s look at the statistics. According to projections, the number of voice assistants in use could hit 8.4 billion by 2024, surpassing the global population. Besides, Statista estimates that the worldwide voice recognition market will expand from 10.7 billion US$ in 2020 to 27.16 billion US$ in 2026. The market is clearly expanding fast, and that could push the majority of apps to keep up and undertake an upgrade.

Of course, cold figures alone are not enough to make a decision, so we are going to highlight a few solid reasons to consider Voice Technology, which affect both your users and your profit. So, let’s get to the point.

Major reasons to consider Voice Technology

Competitive advantage

We mentioned that the number of voice assistants is set to reach remarkable heights, but that doesn’t mean adding your app to this number is a bad idea. If you narrow down the industry your new app targets and research the competitors, you will likely find that only a few of them, or even none, have integrated voice assistants. Implementing new technologies, especially those that rely on AI and Machine Learning, can therefore create a competitive advantage over other apps. And even if you think there is no way to integrate voice recognition into your product, think harder. Voice ordering and voice control could simplify almost any task or feature for the user, whether it is a Transportation Management System (where you can let users manage parcel delivery by voice) or an Online Tutoring Platform (where users could schedule classes and manage audio material with their voices).

24/7 Availability for Users

Sophisticated voice assistants can respond to users’ orders and queries at any moment. Furthermore, because AI can closely mimic human reasoning, many repetitive jobs can be successfully automated. As a result, voice technology may be one of the most cost-effective ways for a company to increase customer satisfaction while also growing its customer base.

Another benefit: if your app is available to users 24/7, you can interact with them for longer, which means higher user engagement and, in turn, more profit.

Production efficiency

For users, the efficiency starts as soon as they turn on voice ordering or voice recognition. With that function, they can handle several tasks at a time, which improves their satisfaction with the app and helps attract more customers.

Businesses, too, are constantly seeking ways to improve their efficiency. As a result, numerous executives have begun to use voice technology for company management as well, by implementing it in internal operations. With AI-trained voice assistants, the workflow moves faster, because the machine can carry out multiple tasks on a simple spoken order from an employee.

Customization

Voice technology offers data that can help you understand your target user. For example, you can look at how many times a specific type of voice search query led a person to your webpage. This gives you information on the user’s browsing and purchasing habits. With such information, you can create better marketing strategies and upgrade the app to meet users’ demands, which is important for keeping the app competitive on the market.

Now that you know what voice technology could mean for your app, it’s time to see how to apply this innovation to your idea and how to deploy it. For your convenience, we have put together a simple guide below. Just keep reading!

Getting started

First of all, you need to create an Amazon developer account for Alexa. After that, you can go to the Developer Console and start creating your first Alexa skill. To do so, you need to decide on the skill name. The skill name can be anything, but it should be customized and unique so that it does not duplicate another skill on the market. For this example, we chose ‘Incora assistant’.

The next step is to choose between the pre-built models and a custom one. It’s your choice, but we recommend picking the Custom option and building the model yourself. Then you need to decide how to host your skill. You can use the Alexa-hosted options for practice. For production, however, we recommend hosting the Lambda function on AWS, in which case you should select the ‘Provision your own’ block.

To get started, the Alexa console offers a choice of templates for the backend code and interaction model. Once again, if you have decided to create a unique solution, click on the ‘Start from Scratch’ option.

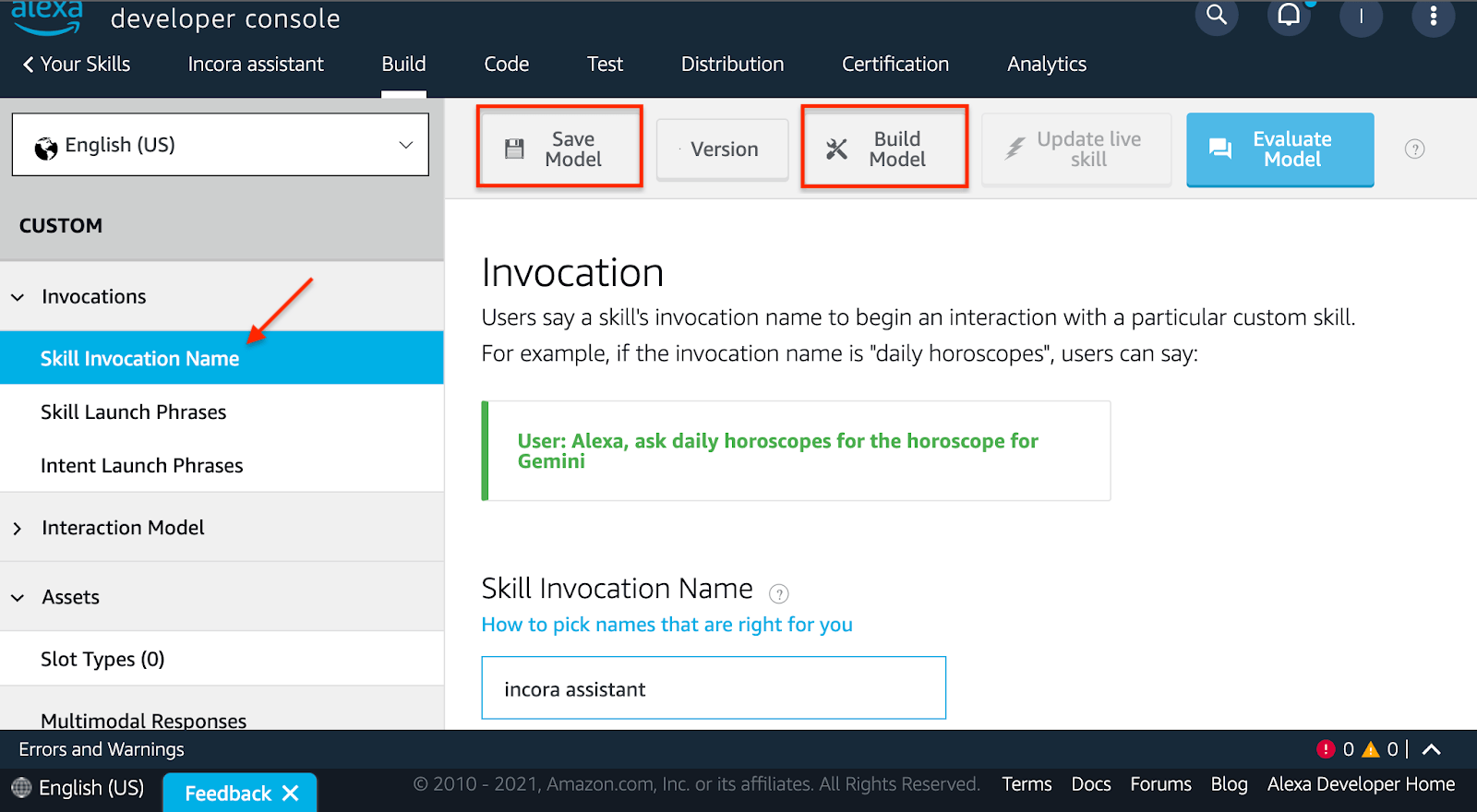

Finally, when the initial preparation is over, you can continue building your voice skill. Now you need to decide on the Skill Invocation Name. Make sure to pick a word or phrase that is easy for the user to remember and pronounce. It can be similar to the skill name.

When you are done, don’t forget to save and build the model after each change you make.
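As a rough aid for choosing an invocation name, the sketch below checks a candidate against a few of the rules Amazon publishes for custom skills (lowercase letters, spaces, apostrophes and periods only; at least two words; no wake words). The function name `checkInvocationName` and the simplified rule list are assumptions made for illustration; the Developer Console remains the authority on what is accepted.

```javascript
// Hypothetical helper: a rough pre-check of an Alexa invocation name.
// The rules below are a simplified subset, not Amazon's full validation.
const WAKE_WORDS = ['alexa', 'amazon', 'echo', 'computer']

function checkInvocationName (name) {
  const problems = []
  if (!/^[a-z][a-z .']*$/.test(name)) {
    problems.push('use only lowercase letters, spaces, apostrophes and periods')
  }
  const words = name.trim().split(/\s+/).filter(Boolean)
  if (words.length < 2) {
    problems.push('use at least two words')
  }
  if (words.some(word => WAKE_WORDS.includes(word.toLowerCase()))) {
    problems.push('do not include a wake word')
  }
  return problems
}

console.log(checkInvocationName('incora assistant')) // empty list: no problems found
console.log(checkInvocationName('Alexa')) // lists every rule this name breaks
```

An empty result only means the name passed these few checks; it can still be rejected by the console for other reasons.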

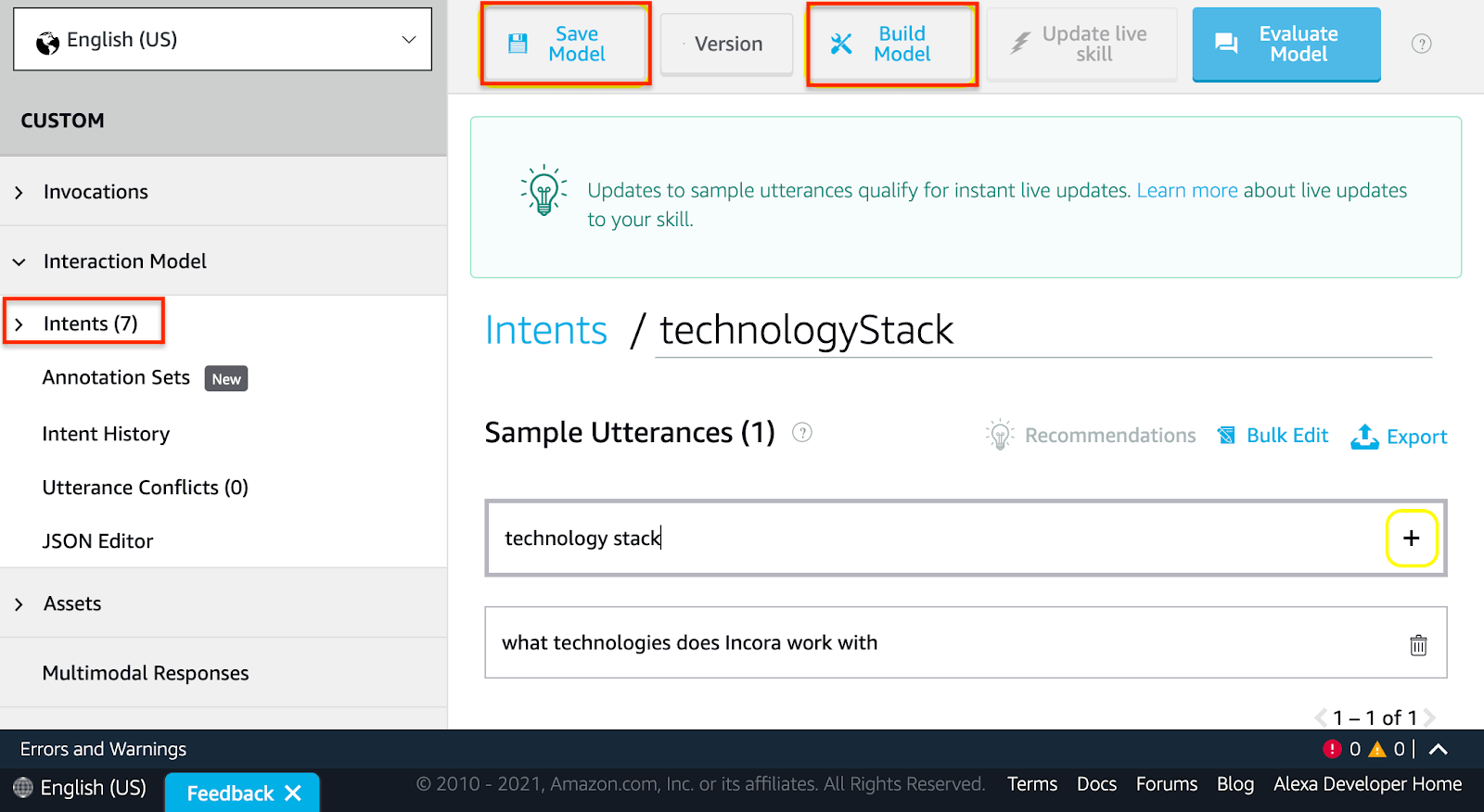

Now you can create your own intents. An intent is an action that takes place in response to a user’s spoken request. In the sidebar, go to ‘Interaction Model’ and then click on ‘Intents’. There you can create custom intents. Afterward, you should add sample utterances: a collection of plausible spoken sentences that are mapped to the intents.
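For reference, what the console builds from these steps is an interaction model that pairs each intent with its sample utterances. The sketch below mirrors that shape as a plain JavaScript object. The utterances are hypothetical, chosen only to illustrate, and the `intentsWithoutSamples` helper is not part of any Alexa tooling.

```javascript
// Sketch of an interaction model, shaped like the console's JSON editor output.
// The sample utterances here are illustrative assumptions.
const interactionModel = {
  interactionModel: {
    languageModel: {
      invocationName: 'incora assistant',
      intents: [
        { name: 'AMAZON.HelpIntent', samples: [] },
        { name: 'AMAZON.CancelIntent', samples: [] },
        { name: 'AMAZON.StopIntent', samples: [] },
        {
          name: 'HelloWorldIntent',
          samples: ['hello', 'say hello', 'tell me about your technology stack']
        }
      ]
    }
  }
}

// Built-in AMAZON.* intents ship with their own utterances;
// custom intents rely on the samples you provide.
function intentsWithoutSamples (model) {
  return model.interactionModel.languageModel.intents
    .filter(intent => !intent.name.startsWith('AMAZON.'))
    .filter(intent => intent.samples.length === 0)
    .map(intent => intent.name)
}

// Lists custom intents that still have no utterances.
console.log(intentsWithoutSamples(interactionModel))
```

Running a check like this before building the model is one way to catch a custom intent that was created but never given any utterances.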

Then create a Lambda function. To do this, we use Node.js, the Serverless Framework, and the Alexa ask-sdk.

Let’s start writing some code. We need to create handlers for the standard Alexa requests and intents, each in its own file. You can find them below.

src/handlers/LaunchRequestHandler.js

    // Handles the request sent when the user opens the skill without a specific intent.
    const LaunchRequestHandler = {
      canHandle (handlerInput) {
        return handlerInput.requestEnvelope.request.type === 'LaunchRequest'
      },
      handle (handlerInput) {
        return handlerInput.responseBuilder
          .speak('Welcome to Incora assistant. You can ask about technology stack, projects, and a lot more')
          .reprompt('What\'s your request? ')
          .getResponse()
      }
    }

    module.exports = LaunchRequestHandler
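A handler like this can be exercised locally before anything is deployed. The sketch below repeats the handler (with a shortened greeting) and feeds it a hand-made `handlerInput`. Both `fakeResponseBuilder` and `fakeInput` are stand-ins written for this example: they only record the calls, so the object returned is simpler than the response the real ask-sdk builds.

```javascript
const LaunchRequestHandler = {
  canHandle (handlerInput) {
    return handlerInput.requestEnvelope.request.type === 'LaunchRequest'
  },
  handle (handlerInput) {
    return handlerInput.responseBuilder
      .speak('Welcome to Incora assistant.')
      .reprompt('What\'s your request?')
      .getResponse()
  }
}

// Stand-in for the SDK's response builder (assumption: only the three
// methods used above are needed). Each setter returns the builder for chaining.
function fakeResponseBuilder () {
  const response = {}
  return {
    speak (text) { response.speech = text; return this },
    reprompt (text) { response.reprompt = text; return this },
    getResponse () { return response }
  }
}

// Builds the minimal handlerInput shape the handler reads from.
function fakeInput (requestType) {
  return {
    requestEnvelope: { request: { type: requestType } },
    responseBuilder: fakeResponseBuilder()
  }
}

const launch = fakeInput('LaunchRequest')
console.log(LaunchRequestHandler.canHandle(launch)) // true
console.log(LaunchRequestHandler.handle(launch))
console.log(LaunchRequestHandler.canHandle(fakeInput('IntentRequest'))) // false
```

The same two helpers can be reused for the other handlers by adding an `intent` object to the fake request.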

src/handlers/HelpIntentHandler.js

    const HelpIntentHandler = {
      canHandle (handlerInput) {
        return handlerInput.requestEnvelope.request.type === 'IntentRequest' &&
          handlerInput.requestEnvelope.request.intent.name === 'AMAZON.HelpIntent'
      },
      handle (handlerInput) {
        return handlerInput.responseBuilder
          .speak('You can say: \'alexa, hello\'')
          .reprompt('What\'s your request? ')
          .getResponse()
      }
    }

    module.exports = HelpIntentHandler

src/handlers/FallbackHandler.js

    // Catch-all for any intent that no other handler claimed.
    // Register it after the specific intent handlers.
    const FallbackHandler = {
      canHandle (handlerInput) {
        return handlerInput.requestEnvelope.request.type === 'IntentRequest'
      },
      handle (handlerInput) {
        return handlerInput.responseBuilder
          .speak('Can you repeat it, please? ')
          .reprompt('What\'s your request? ')
          .getResponse()
      }
    }

    module.exports = FallbackHandler

src/handlers/ErrorHandler.js

    // Handles any error thrown while processing a request.
    const ErrorHandler = {
      canHandle () {
        return true
      },
      handle (handlerInput, error) {
        console.log('ERROR HANDLED', error)
        return handlerInput.responseBuilder
          .speak('Sorry, I had trouble doing what you asked. Please try again. ')
          .reprompt('What\'s your request? ')
          .getResponse()
      }
    }

    module.exports = ErrorHandler

src/handlers/CancelAndStopIntentHandler.js

    const CancelAndStopIntentHandler = {
      canHandle (handlerInput) {
        return handlerInput.requestEnvelope.request.type === 'IntentRequest' &&
          (handlerInput.requestEnvelope.request.intent.name === 'AMAZON.CancelIntent' ||
            handlerInput.requestEnvelope.request.intent.name === 'AMAZON.StopIntent')
      },
      handle (handlerInput) {
        return handlerInput.responseBuilder
          .speak('Goodbye ')
          .getResponse()
      }
    }

    module.exports = CancelAndStopIntentHandler

src/handlers/SessionEndedRequestHandler.js

    const SessionEndedRequestHandler = {
      canHandle (handlerInput) {
        return handlerInput.requestEnvelope.request.type === 'SessionEndedRequest'
      },
      handle (handlerInput) {
        // Any cleanup logic goes here.
        return handlerInput.responseBuilder.getResponse()
      }
    }

    module.exports = SessionEndedRequestHandler

Next, add a custom handler for your own intent, such as ‘TechnologyStackIntentHandler’.

src/handlers/TechnologyStackIntentHandler.js

    const TechnologyStackIntentHandler = {
      canHandle (handlerInput) {
        // The intent name must match the one defined in the skill's interaction model.
        return handlerInput.requestEnvelope.request.type === 'IntentRequest' &&
          handlerInput.requestEnvelope.request.intent.name === 'HelloWorldIntent'
      },
      handle (handlerInput) {
        // add your own logic
        // get data from some API, database, etc.
        return handlerInput.responseBuilder
          .speak('Our technology stack comprises JavaScript (Node, Angular, React, Ember, Vue), Python (Django), Mobile apps (React Native, Ionic).')
          .reprompt('What\'s your request? ')
          .getResponse()
      }
    }

    module.exports = TechnologyStackIntentHandler
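The `serverless.yml` file further down points at `src/index.handler`, so the handlers need an entry file that registers them. With `ask-sdk-core` this is typically done through `Alexa.SkillBuilders.custom()`, and the SDK asks each handler's `canHandle` in registration order. The sketch below imitates only that ordering rule with simplified stand-in handlers (the `pickHandler` function and the trimmed handlers are assumptions for illustration), to show why the catch-all `FallbackHandler` has to come last.

```javascript
// Simplified stand-ins: only canHandle matters for this illustration.
const isIntent = (input, name) =>
  input.request.type === 'IntentRequest' && input.request.intent.name === name

const handlers = [
  { name: 'LaunchRequestHandler', canHandle: input => input.request.type === 'LaunchRequest' },
  { name: 'HelpIntentHandler', canHandle: input => isIntent(input, 'AMAZON.HelpIntent') },
  { name: 'TechnologyStackIntentHandler', canHandle: input => isIntent(input, 'HelloWorldIntent') },
  // Catch-all for any other intent: must stay last, or it shadows the rest.
  { name: 'FallbackHandler', canHandle: input => input.request.type === 'IntentRequest' }
]

// Hypothetical dispatcher: the first handler whose canHandle returns true wins.
function pickHandler (orderedHandlers, input) {
  const match = orderedHandlers.find(handler => handler.canHandle(input))
  return match ? match.name : null
}

const helpRequest = { request: { type: 'IntentRequest', intent: { name: 'AMAZON.HelpIntent' } } }
console.log(pickHandler(handlers, helpRequest)) // HelpIntentHandler

// Moving the catch-all to the front changes the result.
const wrongOrder = [handlers[3], ...handlers.slice(0, 3)]
console.log(pickHandler(wrongOrder, helpRequest)) // FallbackHandler

// With the real SDK, src/index.js would look roughly like this
// (assuming the ask-sdk-core package and the handler files shown earlier):
//   const Alexa = require('ask-sdk-core')
//   exports.handler = Alexa.SkillBuilders.custom()
//     .addRequestHandlers(
//       LaunchRequestHandler, HelpIntentHandler, CancelAndStopIntentHandler,
//       SessionEndedRequestHandler, TechnologyStackIntentHandler, FallbackHandler)
//     .addErrorHandlers(ErrorHandler)
//     .lambda()
```

Keeping the specific handlers ahead of the fallback is a design choice that follows from this first-match rule, not something the SDK enforces for you.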

For the next step, you need to have or create an AWS account. Set up AWS credentials on your local machine, configure the serverless.yml file, and run yarn deploy or npm run deploy.

serverless.yml

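    service: alexa-lambda-example

    plugins:
      - serverless-pseudo-parameters
      - serverless-iam-roles-per-function

    provider:
      name: aws
      # nodejs14.x is past end of support on AWS Lambda;
      # switch to a currently supported Node.js runtime if the deployment is rejected
      runtime: nodejs14.x
      region: us-east-1
      stage: prod

    functions:
      info:
        handler: src/index.handler
        events:
          # ID of the skill that is allowed to invoke this function
          - alexaSkill: amzn1.ask.skill.XXXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXXX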

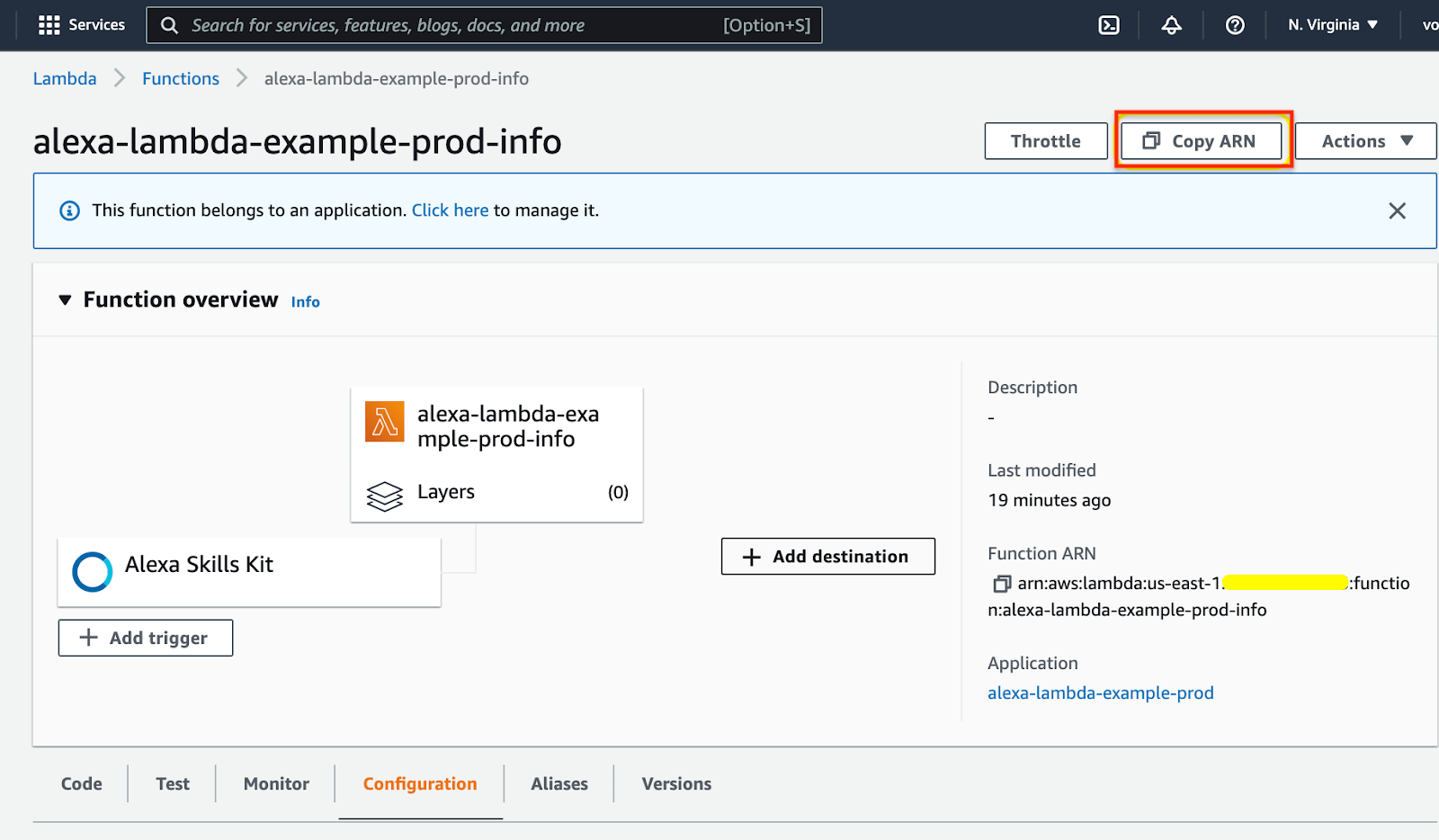

After that, open the deployed function in the AWS Lambda console and copy its ARN.
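If it helps to know what to look for, a Lambda ARN has the form `arn:aws:lambda:<region>:<account-id>:function:<function-name>`, and the Serverless Framework names functions `<service>-<stage>-<function>` by default. The sketch below assembles the ARN expected for the configuration above. The account ID is a made-up placeholder and `lambdaArn` is a hypothetical helper.

```javascript
// Hypothetical helper: builds the ARN a default Serverless deployment would produce.
function lambdaArn ({ region, accountId, service, stage, functionName }) {
  return `arn:aws:lambda:${region}:${accountId}:function:${service}-${stage}-${functionName}`
}

const arn = lambdaArn({
  region: 'us-east-1',
  accountId: '123456789012', // placeholder, replace with your AWS account ID
  service: 'alexa-lambda-example',
  stage: 'prod',
  functionName: 'info'
})

console.log(arn)
// arn:aws:lambda:us-east-1:123456789012:function:alexa-lambda-example-prod-info
```

The value shown in the AWS console is the one to copy; this only helps to recognize the right function among several.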

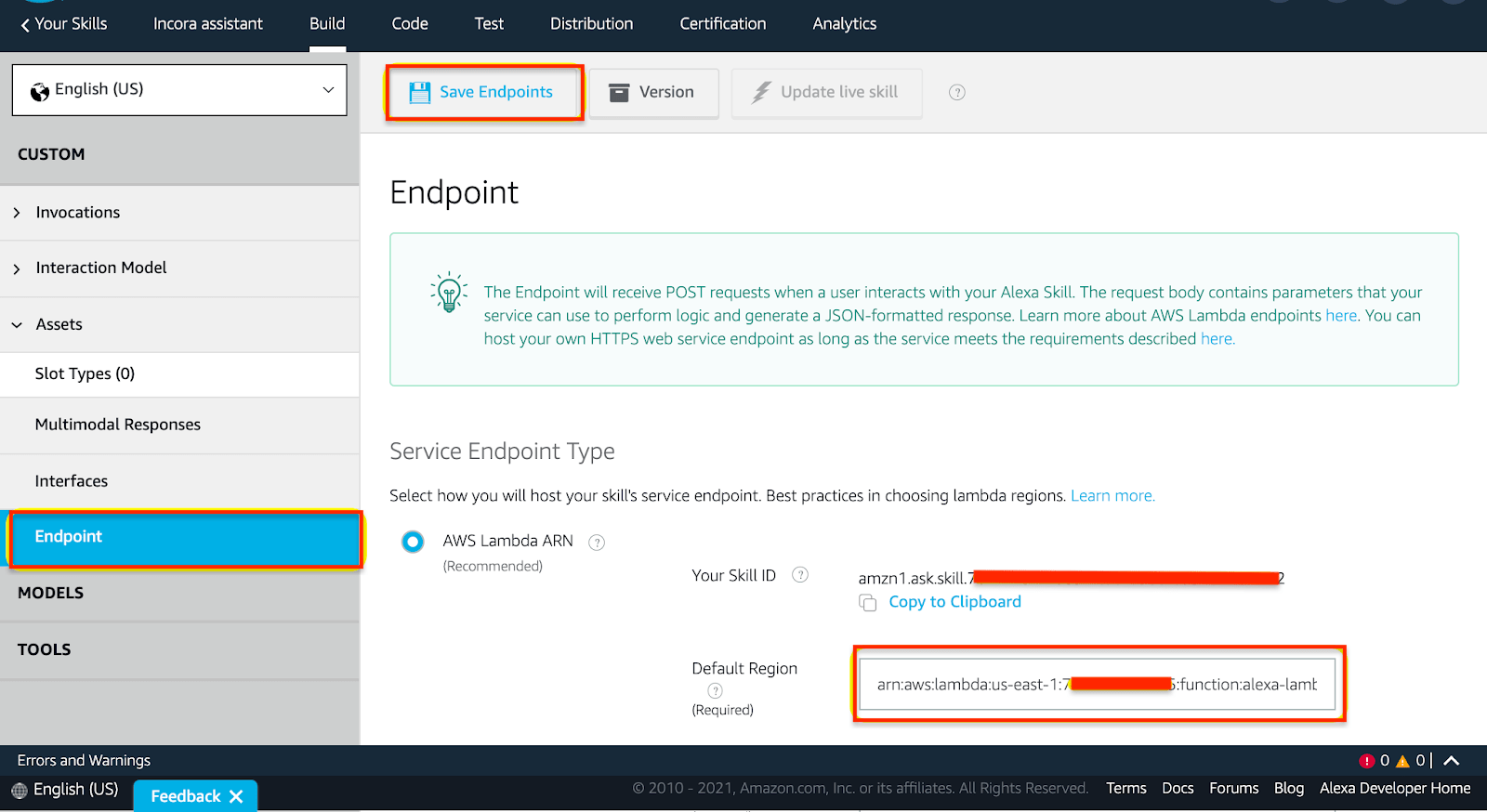

Go to the Alexa developer console and set your AWS Lambda ARN as the endpoint.

Finally, your skill is built, and you can test it using the Alexa developer console or any Alexa device. Don’t forget to switch to Development mode in the ‘Test’ tab of the navigation bar.

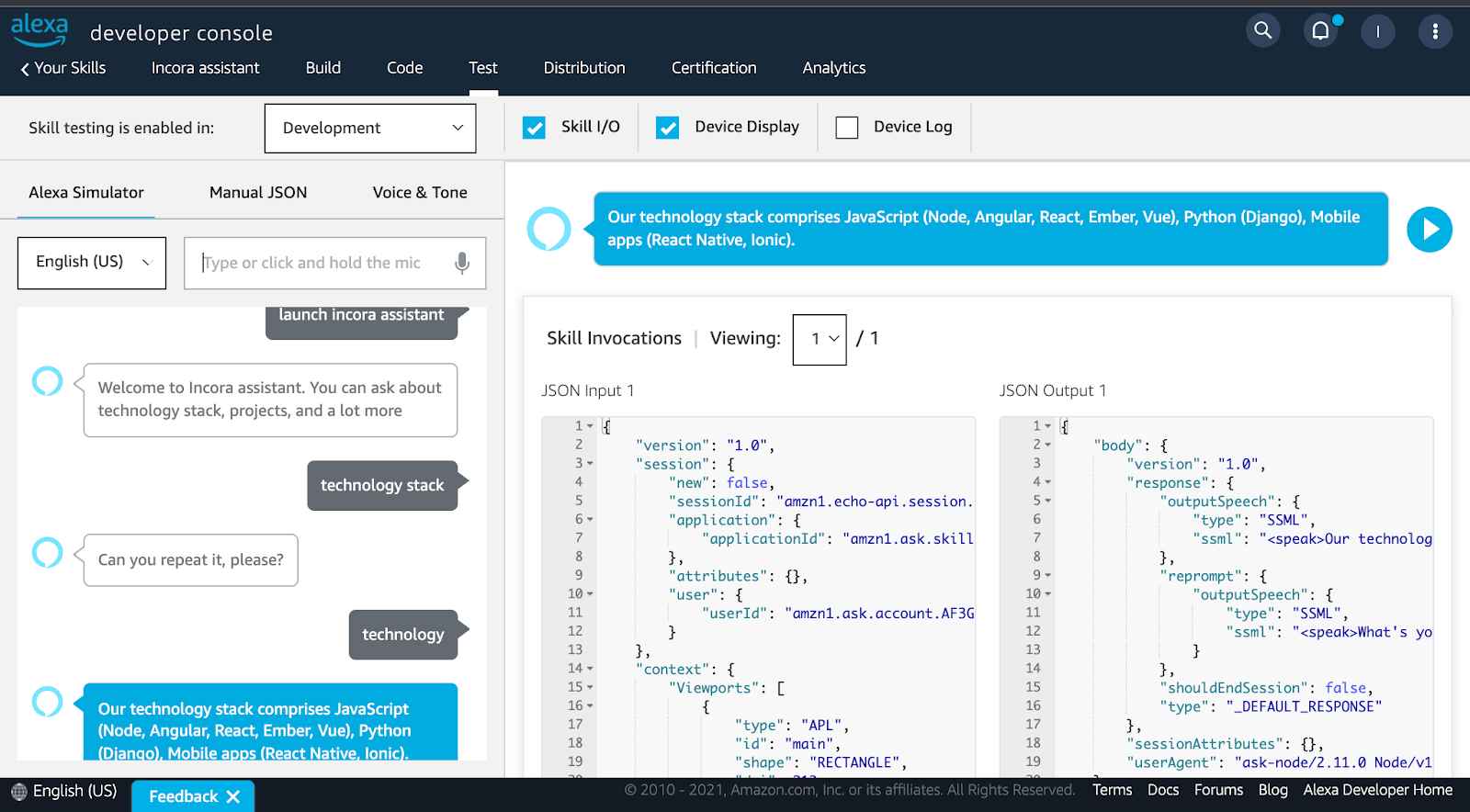

To verify the code, we deployed it and tried it out in the developer console.

After the testing stage, at long last, you have a voice assistant of your own. The next step would be to integrate it into the hardware system.

Conclusion

Voice technology has a future in every industry. The potential of voice recognition will only grow as AI and Machine Learning advance, making it ever more useful on the market. Voice technology introduces a completely new way of communicating with clients and enhances their engagement.

Since our Incora team has expertise in developing this kind of solution, we would love to hear more bold ideas from you on how Voice Technology can be used in your particular case. Contact us, and let’s create the future together.
